The Missing Stars in NASA Photos (It’s Not What You Think) - Artemis II Photos Explained

Introduction: The “Missing Stars” Mystery

If you’ve ever looked at photos of the Moon, especially recent images from the Artemis II mission, you may have noticed something strange: where are all the stars? It’s space, after all. Shouldn’t the sky be filled with millions of stars? Naturally, the internet has opinions. Some claim the photos are fake, others joke that NASA “forgot to add the stars,” and a few go even further down the rabbit hole (aliens hiding the stars, anyone?). But the real explanation is far less dramatic and far more interesting. It all comes down to photography. In this post, I’m going to break it down using my 20+ years of experience working as a photographer. Buckle up, we’re about to take off on a photographic journey that explains exactly what’s going on.

Below are some images from the Artemis II Lunar Flyby. Here is a link to the NASA webpage with more images so you can look at all the photos in the gallery: https://www.nasa.gov/gallery/lunar-flyby/

The Moon Is Blindingly Bright (Yes, Really)

Here’s the first thing most people underestimate: the Moon is actually incredibly bright. Not because it emits light, but because it reflects sunlight. And sunlight in space is intense, unfiltered by Earth’s atmosphere. I mean, the Sun is literally the brightest thing in the solar system, and that light is reflecting off the Moon at the astronauts who are taking these pictures. Now, for you photographers, you all know what light falloff is. The farther you move from a light source (here, the Moon), the dimmer it appears, and the closer you are, the brighter it appears. So as the astronauts are shooting the Moon from close range, the Moon, to them, would be a lot brighter than it appears to us here on Earth.

📸 Light falloff

Light falloff is the way light rapidly decreases in intensity as it moves away from the source. In photography terms: The closer your subject is to the light, the brighter it is, and the faster it gets darker as distance increases. This is why moving a light just a little closer or farther can dramatically change exposure and contrast.
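To put a rough number on that, here is a minimal Python sketch of the inverse-square law, the standard physics behind light falloff for a small, point-like source. The function name `relative_intensity` is purely illustrative and does not come from any library.

```python
def relative_intensity(distance: float, reference_distance: float = 1.0) -> float:
    """Light intensity relative to what you get at the reference distance.

    Assumes an idealized point-like source (inverse-square law).
    """
    if distance <= 0 or reference_distance <= 0:
        raise ValueError("distances must be positive")
    return (reference_distance / distance) ** 2


# Move the light (or yourself) to 2x, 4x and 8x the original distance.
for multiple in (1, 2, 4, 8):
    print(f"{multiple}x distance -> {relative_intensity(multiple):.4f} of the light")
```

Doubling the distance leaves one quarter of the light, which photographers would call a two-stop loss.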

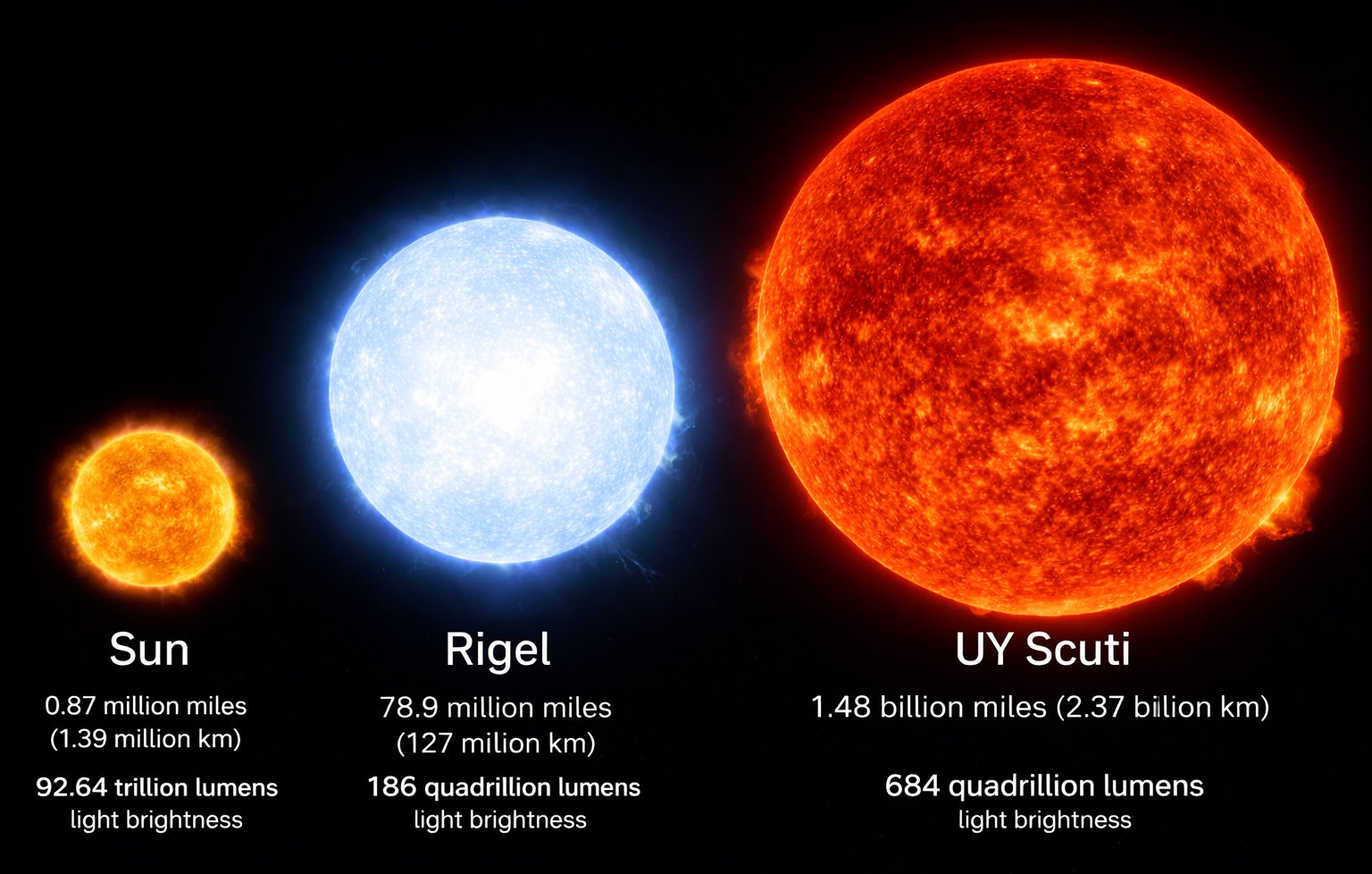

A visual comparison of the Sun, blue supergiant Rigel, and hypergiant UY Scuti, showing their size differences and luminosity.

Meanwhile, the stars? Yes, OK, some of them might be blue supergiants (like Rigel or Deneb) or hypergiants (like UY Scuti) and be tens or even hundreds of thousands of times more luminous than our Sun, but because of light falloff over distance they’re ridiculously dim by comparison. Anyway, I’m getting off topic. I love this stuff.

So right away, you’ve got a massive dynamic range problem because of the difference in relative brightness. Not to mention that NASA, for some reason, is shooting with Nikon D5 cameras, which are 10 years old at this point. The D5 was released back in 2016, and sensor technology and dynamic range have improved quite a bit since then, so it’s a bit of a head-scratcher as to why they are using outdated tech. Anyway, back to the dynamic range issue.

Bright Moon = tons of light

Stars = barely any light

Your camera can only expose for one at a time. Either you expose for the Moon or for the distant stars, but you can’t expose for both at the same time. Modern mirrorless cameras are much better at capturing dynamic range than older systems. For example, the Canon R5 Mark II includes features like Highlight Tone Priority, which help preserve detail in bright areas of an image. It’s also worth noting that cameras with larger sensors, such as medium format systems like the Fujifilm GFX100S, typically offer greater dynamic range than full-frame cameras like the Nikon D5. They also capture more fine detail, which is a bonus for scientific study, and generally perform better in low-light situations due to their larger sensor size. That said, space photography introduces unique challenges, and there may be advantages to cameras like the Nikon D5 that aren’t immediately obvious. If I come across anything interesting in my research, I’ll be sure to cover it in a future post.

* Update: I did some Interwebs digging and this is what I found out about NASA and the D5

Exposure 101: Why Cameras Can’t See Everything at Once

🧠 Cameras don’t work like human eyes. They can’t magically balance extreme differences in brightness. They can only expose for one light value at a time. Which makes me wonder: how did the view of the Moon with the stars behind it really look to the astronauts peering out the capsule window? It must have been an awe-inspiring view.

If you expose correctly for the Moon:

The Moon looks sharp and detailed

The sky goes completely black

Stars disappear

If you expose for the stars:

Stars become visible

The Moon turns into a blown-out white blob

There is no single exposure that cleanly captures both. That said, computational photography in smartphones has kind of paved the way to a solution. If a company created a full-frame camera that could take several photos at once at different exposure values and computationally stitch them together, that would be pretty amazing for space photography and an incredible marketing opportunity for whichever camera brand came out with it. *cough* Canon, do it! I know cameras have an HDR photo mode, but the tech is still in its infancy on full-frame cameras.

Shutter Speed vs. Stars: The Real Trade-Off

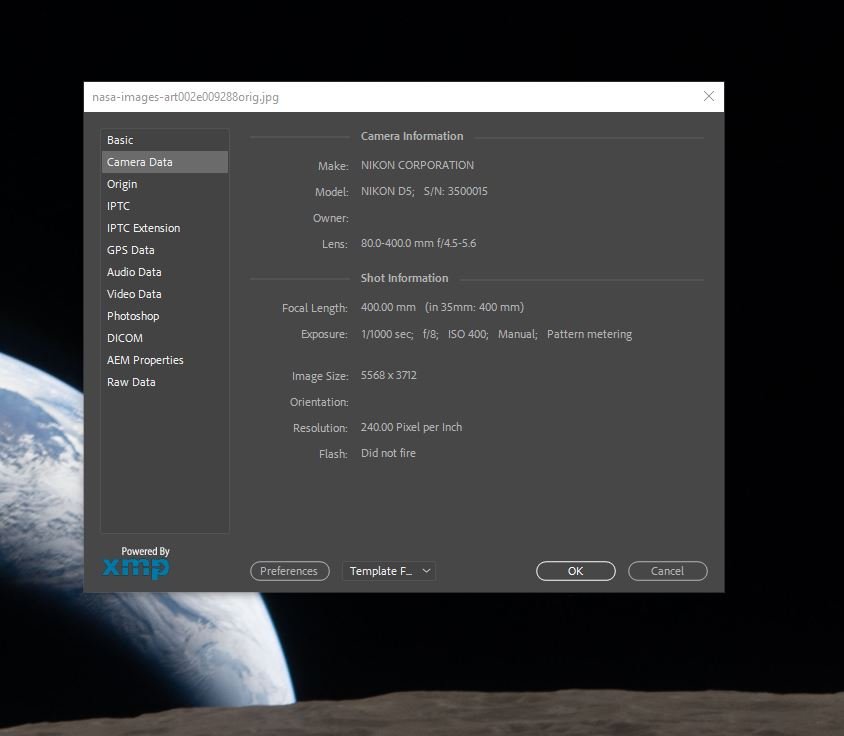

Here’s where things get even more interesting. To capture a sharp image of the Moon from a moving spacecraft, you need a fast shutter speed. And we’re not talking fast, we’re talking very fast. From what I heard on NASA’s live YouTube stream, the astronauts were shooting with an 80-400mm zoom lens. When it comes to telephoto lenses and sharp images, the rule of thumb I go by is: make sure your shutter speed is at least double your focal length. So at 400mm you’d want your shutter speed to be 1/800s at minimum. Now, that is assuming you can hold your camera steady with your feet planted firmly on the ground. I can only imagine how difficult that must be floating around in zero gravity. Oh, and if holding steady wasn’t hard enough, the astronauts were approaching the Moon at a speed of 2,053 km/h (1,276 mph), meaning you’d need an even faster shutter speed to capture crisp images. If I were hired to shoot pictures of the Moon in zero-g with a 400mm lens, I’d have my shutter speed at 1/2000s at minimum, and 1/4000s if possible, to ensure sharp shots.
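The shutter speed rule of thumb above is simple enough to write down as a tiny Python sketch. The helper `min_shutter` and its `safety_factor` parameter are hypothetical names made up for this example; a factor of 2 matches the "double your focal length" rule described here, and it says nothing about the extra margin you would want in zero-g.

```python
from fractions import Fraction


def min_shutter(focal_length_mm: int, safety_factor: int = 2) -> Fraction:
    """Slowest recommended handheld shutter time, in seconds.

    Rule of thumb only: 1 / (focal length x safety factor).
    """
    if focal_length_mm <= 0 or safety_factor <= 0:
        raise ValueError("focal length and safety factor must be positive")
    return Fraction(1, focal_length_mm * safety_factor)


# The two ends and the middle of an 80-400mm zoom.
for focal in (80, 200, 400):
    print(f"{focal}mm -> {min_shutter(focal)} s or faster")
```

At the long end of the zoom this gives 1/800s, the same minimum mentioned above.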

Now here’s the problem. If any of you have ever dabbled in astrophotography, you’ll know all too well that in order to capture photos of stars you need a sturdy tripod and a long exposure measured in seconds, not 1/1000th of a second. Distant stars need long exposures to be visible in images. Astrophotography typically uses:

5 seconds

10 seconds

20+ seconds

Why? Because stars are dim, and you need time to collect their light. So what does this mean in the context of the Artemis II images?

Fast shutter → Moon looks sharp and properly exposed, stars vanish

Slow shutter → Stars appear, Moon becomes overexposed and blurry + stars are blurry due to camera shake.

You physically cannot do both at once in a single shot under those conditions.
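To get a feel for how far apart those two worlds are, here is a rough Python sketch that converts the shutter times above into stops (each stop is a doubling of light). It assumes the aperture and ISO stay the same between the two shots, which is a simplification, and `stops_between` is just an illustrative helper name.

```python
import math


def stops_between(long_exposure_s: float, short_exposure_s: float) -> float:
    """Number of stops (doublings of light) separating two shutter times."""
    if long_exposure_s <= 0 or short_exposure_s <= 0:
        raise ValueError("exposure times must be positive")
    return math.log2(long_exposure_s / short_exposure_s)


moon_shutter = 1 / 1000  # a fast, Moon-friendly shutter time in seconds

# Typical astrophotography exposure times from the list above.
for star_shutter in (5, 10, 20):
    gap = stops_between(star_shutter, moon_shutter)
    print(f"{star_shutter} s vs 1/1000 s -> about {gap:.1f} stops apart")
```

A 10 second star exposure lets in roughly 13 stops more light than a 1/1000s Moon exposure, which is about 10,000 times as much.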

NASA Image EXIF Data

📸 Just for fun, I checked to see if any of the images from the NASA webpage had any EXIF data embedded in them. All the images had their EXIF data removed but one. It still had its EXIF data and was full resolution. Below is a screenshot of the EXIF data. You can see the astronaut was shooting at only 1/1000s at 400mm and ISO 400. In my personal opinion, the shutter speed was too slow to capture a sharp image.
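As a sanity check on those settings, here is a small Python sketch that turns them into an exposure value (EV) normalized to ISO 100 and compares it with the "Looney 11" rule of thumb that Earth-based photographers use for the Moon (f/11 with the shutter at 1/ISO). The f/8 aperture is an assumption on my part: it is the value quoted later in this post for the 400mm image, and I am assuming it is the same frame. The function name `ev100` is illustrative.

```python
import math


def ev100(aperture: float, shutter_s: float, iso: float) -> float:
    """Exposure value normalized to ISO 100: log2(N^2 / t) - log2(ISO / 100)."""
    return math.log2(aperture ** 2 / shutter_s) - math.log2(iso / 100)


# Settings from the EXIF screenshot; f/8 is assumed (see the note above).
exif_ev = ev100(aperture=8, shutter_s=1 / 1000, iso=400)

# "Looney 11" rule of thumb for a sunlit Moon: f/11, 1/100 s at ISO 100.
looney_ev = ev100(aperture=11, shutter_s=1 / 100, iso=100)

print(f"EXIF settings: EV {exif_ev:.1f}")
print(f"Looney 11:     EV {looney_ev:.1f}")
print(f"Difference:    {exif_ev - looney_ev:.1f} stops")
```

Under those assumptions the two land within about half a stop of each other, which is what you would expect from a camera exposed for a sunlit lunar surface rather than for faint stars.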

The Role of ISO (And Why It Doesn’t Save the Day)

You might think, “Just crank the ISO and bring out the stars!” But that won’t work. A higher ISO does brighten the image, but it amplifies everything, including the Moon. So instead of solving the problem, it actually makes it worse:

The Moon gets even brighter (blown out)

The stars are still too dim relative to the light of the Moon

You lose sharpness because of the extra noise at higher ISO

ISO doesn’t fix dynamic range, it just shifts the problem.
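Here is a toy Python model of that idea. It treats ISO as a simple gain applied to every pixel, with anything above 1.0 clipping to pure white. The pixel values for the Moon and the star are made-up numbers chosen only to illustrate the point, and real sensors are more complicated than this.

```python
def apply_iso_gain(signal: float, gain: float, clip: float = 1.0) -> float:
    """Multiply a pixel value by the ISO gain, clipping at pure white."""
    return min(signal * gain, clip)


# Hypothetical linear pixel values at base ISO (1.0 = pure white).
moon_pixel = 0.8
star_pixel = 0.0001

for gain in (1, 4, 16):
    moon = apply_iso_gain(moon_pixel, gain)
    star = apply_iso_gain(star_pixel, gain)
    print(f"gain x{gain:>2}: moon={moon:.4f}  star={star:.4f}")
```

Even at 16 times the gain the star is still barely above black, while the Moon clipped to white two steps earlier.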

In a photography context, capturing both the Moon and the stars with proper exposure would require reducing the Sun’s intensity so that the light reflecting off the Moon is closer in brightness to the faint light from distant stars. This kind of light balancing is common in studio settings, but it’s obviously not something you can realistically do in space.

Why One Photo Does Show Stars

Now here’s the fun part. One of the images actually does show stars. Why? Because:

The Moon isn’t directly lit in the same way

The exposure is balanced differently

The bright subject (Moon surface) isn’t dominating the frame

This allows:

Longer exposure

More light from stars reaching the sensor

In other words: different exposure strategy = different result. However, if you look closely at the stars in the image, you’ll notice they are all elongated, meaning that the photographer had to drag the shutter a bit (longer exposure) to get the shot. It’s kind of cool to look at these images and understand them from an exposure context.

Could You Ever Capture Both the Moon and Stars?

This is an AI-generated image of a view of the Moon’s cratered surface from lunar orbit, with a small, distant Earth floating in the background against a dense, star-filled sky. I can’t help but wonder if this is what it could really look like from lunar orbit to the naked eye.

Technically? Yes you could capture both. Practically? Not really, at least not in a single shot from a spacecraft. You’d need:

Multiple exposures

HDR blending

Compositing or computational photography

Photographers on Earth do this all the time:

One exposure for the Moon

One for the stars

Blend in post

But a documentary-style mission photo? That’s not the goal.
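For the curious, the Earth-based two-exposure workflow described above can be sketched in a few lines of Python. This is a deliberately simplified illustration, not how any particular editing software works: it assumes linear pixel values, two perfectly aligned frames, and made-up numbers. The name `blend_exposures` is hypothetical.

```python
def blend_exposures(short_px, long_px, exposure_ratio, clip=1.0):
    """Merge a short (Moon) and a long (stars) exposure of the same scene.

    exposure_ratio is long exposure time divided by short exposure time.
    The result is expressed in the short exposure's brightness units.
    """
    merged = []
    for short_value, long_value in zip(short_px, long_px):
        if long_value >= clip:
            # Long exposure is blown out here, so trust the short frame.
            merged.append(short_value)
        else:
            # Long exposure holds the faint detail; scale it back down.
            merged.append(long_value / exposure_ratio)
    return merged


# Three hypothetical pixels: Moon surface, a faint star, empty sky.
short_frame = [0.5, 0.0, 0.0]  # star is lost in the fast exposure
long_frame = [1.0, 0.2, 0.0]   # Moon is clipped in the slow exposure

print(blend_exposures(short_frame, long_frame, exposure_ratio=10_000))
```

The merged result keeps the Moon from the short frame and recovers the star from the long one. The hard part in practice is getting two aligned frames, which is easy on a tripod and much harder from a moving spacecraft.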

The Conspiracy Angle (Let’s Talk About It)

Let’s address it head-on. “If there are no stars, the photos must be fake.” This sounds logical, right? Until you understand exposure. The same thing happens on Earth:

Take a photo of a city at night with proper exposure

The sky often looks completely black

Stars vanish

Are your night photos fake too? Below is a photo I took of the Moon (from Earth, because I haven’t had time to fix my spaceship yet). The photo shows the Moon brightly lit in the middle of the frame. Obviously there are stars in the sky, but they are not visible because the brightness of the Moon outshines the dimly lit stars.

Photo of the Moon shot with the TTArtisan 500mm f/6.3 lens showing a night sky with no stars

The reality is, cameras are limited tools, not magic truth machines. And ironically, the lack of stars is actually evidence the photos are real, because:

The exposure behaves exactly as physics predicts

The lighting matches real-world conditions

If NASA did add stars artificially, they’d probably get criticized for that too. However, leaning back into the conspiracy theory side of things, here’s a little astronaut food for thought: if all the Moon missions are indeed fake, then NASA would never want you seeing the stars in any photos. Stars are like a map, and with a picture of the stars, the exact location of the photo could be triangulated. If the stars didn’t match the flight path of the Moon mission, then that would certainly start trending on the interwebs.

However, I have to admit, to my trained eye, there are some fishy things that I noticed in these photos which make me wonder if these images are real. But before I get into that, I also have to say I have never shot photos in space. I have no experience shooting moons with no atmosphere, so this is all conjecture. Below is the image shot at 400mm f/8. Let’s talk about it.

Things to Think About

The astronauts are shooting through thick glass windows designed to withstand reentry (if these images are real), so images won’t be ultra sharp and there could be strange, wonky distortions.

Anybody who has ever shot with a telephoto lens knows that at 400mm, even at f/8, the depth of field is relatively shallow. To keep it simple, background blur sets in pretty quickly at 400mm. Now take a look at the full-sized image. Below the Earth on the Moon’s surface you’ll notice a crater with sharp edges at the 2 o’clock position. The edges of that crater are the sharpest edges in the whole image. I think it’s safe to assume that the camera was focused on that spot. In my experience with telephoto lenses, there is usually a noticeable depth of field line. Everything in the focus plane is sharp, and as you move away from the focus plane things get increasingly blurry. I don’t see that phenomenon in this image. Again, it could be due to the thick window glass bending light in a funny way.

The Moon’s horizon, or I guess in this case the outside edge of the Moon, looks surprisingly consistent in sharpness to me. It’s the same level of blur all the way from edge to edge. In my opinion, it should be sharpest in the middle and become increasingly blurry as you move towards the edges. Zoom into the image at 100% and take a look for yourself. It looks suspect to me, but then again the Moon has no atmosphere, so there is nothing to scatter the light. The lens is also being shot at f/8, which is most likely its sweet spot for edge-to-edge sharpness. It looks off to me, but I have no visual reference to compare it to.
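One thing worth checking on the depth of field question is the hyperfocal distance at 400mm and f/8. The Python sketch below uses the standard formula H = f² / (N × c) + f. The circle of confusion of 0.03mm is a common convention for full-frame sensors and is an assumption here, as is the helper name `hyperfocal_distance_m`.

```python
def hyperfocal_distance_m(focal_length_mm: float, aperture: float,
                          coc_mm: float = 0.03) -> float:
    """Hyperfocal distance in meters: H = f^2 / (N * c) + f."""
    if focal_length_mm <= 0 or aperture <= 0 or coc_mm <= 0:
        raise ValueError("all inputs must be positive")
    h_mm = focal_length_mm ** 2 / (aperture * coc_mm) + focal_length_mm
    return h_mm / 1000  # millimeters to meters


h = hyperfocal_distance_m(400, 8)
print(f"Hyperfocal distance at 400mm f/8: about {h:.0f} m")
```

With a lens focused at or beyond the hyperfocal distance, everything out to infinity is rendered acceptably sharp. A lunar flyby happens much farther away than a few hundred meters, so under these assumptions the whole scene would sit inside the depth of field. That could be one reason there is no visible focus falloff line in the image.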

Now let’s talk about exposure. When you’re on the Earth and you shoot a landscape with the Moon in the background, the Moon usually comes out looking like a white glowing ball. It’s really hard to expose both the Moon and the landscape in front of you at the same time. The Moon is reflecting too much light. Now let’s reverse the situation. In this image we’re looking at a picture of a Moon landscape with the Earth in the background. The Earth is a bigger object covered in clouds, reflecting way more light than the Moon ever could. It’s a bigger, brighter object in the sky. Again, I’ve never shot pictures from the Moon, so I don’t know, but I am curious as to why the Earth isn’t blown out. It could possibly be that the exposure readings were taken off the Earth to ensure it’s exposed properly, and then the brightness of the Moon was adjusted in post.
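A rough way to size up the Earth versus Moon exposure question is to compare how reflective each one is, since both sit at nearly the same distance from the Sun and receive nearly the same sunlight. The Python sketch below uses approximate, commonly cited average reflectivity (albedo) figures. Treat both numbers as ballpark assumptions: they ignore cloud cover at the time, viewing angle, and which part of each body is in frame.

```python
import math

# Approximate average reflectivity (albedo); ballpark assumptions only.
EARTH_ALBEDO = 0.30
MOON_ALBEDO = 0.12


def brightness_gap_stops(brighter: float, dimmer: float) -> float:
    """Difference in stops between two surface brightness levels."""
    if brighter <= 0 or dimmer <= 0:
        raise ValueError("brightness values must be positive")
    return math.log2(brighter / dimmer)


gap = brightness_gap_stops(EARTH_ALBEDO, MOON_ALBEDO)
print(f"Earth is roughly {EARTH_ALBEDO / MOON_ALBEDO:.1f}x as reflective as the Moon")
print(f"That is about {gap:.1f} stops")
```

By this estimate the sunlit Earth is only a stop or so brighter per unit area than the sunlit Moon, which is a much smaller gap than a night landscape versus the Moon and may be why both can hold detail in one frame.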

These are just thoughts. It’s hard to definitively say real or fake, and arguments can be made for both sides of the coin. I wish NASA did a better job of showing the Earth and the Moon. The D5 is capable of shooting 4K video, and the astronauts had iPhones, so they could have also shot some 4K videos if they wanted. It would have been really cool to see a video with the clouds moving over the Earth while the Moon passes in front.

Final Thoughts

Well, that was a fun exposure adventure. Hope you all walked away with some interesting new thoughts. The “missing stars” in NASA photos aren’t a mystery. They’re a perfect real-world demonstration of how photography, exposure, and light work. When you understand the fundamentals, the explanation becomes simple: the Moon is incredibly bright due to direct sunlight, distant stars are extremely dim due to light falloff, and camera sensors, especially when using fast shutter speeds, can’t capture both extremes in a single exposure. This is why images from missions like Artemis II often show a sharply detailed Moon against a completely black sky. So the next time you see a Moon photo with no visible stars, you’ll know it’s not suspicious or staged. It’s actually a technically correct exposure based on the physics of light and the limitations of cameras. I think it’s pretty cool to be able to analyze these photos taken of the Moon from a photographer’s perspective. Real or fake, I know I’m excited to see more Moon shots. If you’re into photography and want to learn more, check out the rest of my blog and subscribe to my YouTube channel :)

FAQ

Why is space black in photos?

Because the camera is exposed for a bright subject (like the Moon or spacecraft), making dim stars invisible.

Can astronauts see stars with their eyes?

Yes (I assume), but even then, visibility depends on lighting conditions and whether they’re in shadow.

Why don’t cameras capture what our eyes see?

Cameras have limited dynamic range. Human vision adapts in real time plus our brains 🧠 interpret the signals coming from our eyes to create what we see; cameras don’t.

Could NASA just adjust the exposure?

Not without ruining the main subject (the Moon). Exposure is always a trade-off. However, NASA could have shot images of just the stars without the Earth, Moon or Sun in the frame.

Why do astrophotographers see stars easily?

They use:

Long exposures

Tripods

Carefully controlled settings

Completely different shooting conditions.

Is there any situation where both stars and the Moon are visible?

Only with:

Multiple exposures

Image stacking

Or specific lighting conditions (like a dim crescent Moon)